Answer: The one in the middle, because it does not fly

The Ludic Fallacy

In The Black Swan [i], author Nassim Nicholas Taleb describes seemingly implausible occurrences that are easy to explain after the fact. The classic is the black swan, assumed to be impossible by Europeans until one was discovered by explorers in Australia in 1697. In the book, Taleb defines the Ludic Fallacy as “the misuse of games to model real-life situations.” That is, “basing studies of chance on the narrow world of games and dice.” Ludic comes from the Latin ludus, a game or sport. And I agree that it is naïve to model complex phenomena like economies, weather, and cyber-attacks on such simple uncertainties, but …

The Ludic Fallacy Fallacy

I define the Ludic Fallacy Fallacy as “attempting to model real-life situations without understanding the narrow world of games and dice.” Games and dice teach us truths about actual economies, weather, and cyber-attacks just as paper airplanes teach us truths about aerodynamic forces.

Radical Uncertainty

Radical Uncertainty: Decision-Making Beyond the Numbers by John Kay & Mervyn King [ii] is the Ludic Fallacy on steroids. It is a 500-page critique, not of “the narrow world of games and dice,” but of the narrow axiomatic approach to decision making under uncertainty, which has been widely adopted in economics, finance, and decision science. In keeping with Taleb, I will call this the Axiomatic Fallacy. Since my father, Leonard Jimmie Savage, was one of the founders of the approach, and proposed the pertinent axioms in his 1954 book, The Foundations of Statistics, I was eager to see what Kay and King had to say.

The Axiomatic Approach

My father framed the issue as follows:

The point of view under discussion may be symbolized by the proverb, “Look before you leap,” and the one to which it is opposed by the proverb, “You can cross that bridge when you come to it.”

Looking before leaping requires advance planning in the face of uncertainty, for which my father sought a formal approach under idealized circumstances. Interestingly, Radical Uncertainty and my own book, The Flaw of Averages, both quote one of the same passages from my father’s work, in which he describes the application to practical problems:

It is even utterly beyond our power to plan a picnic or to play a game of chess according to this principle.

By this, my father meant that the axiomatic approach applies to making optimal choices only in what he called “small worlds,” in which you can enumerate all the bridges you might encounter along with the chances of encountering them. According to Kay and King, both my father and his Nobel Prize-winning student, Harry Markowitz, who applied the theory to investments and invented Modern Portfolio Theory, were careful not to claim “large world” results. But the authors complain that for years, many economists and others have pushed the theory beyond its intended limits.
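To make the “small world” idea concrete, here is a minimal sketch of the kind of calculation the axiomatic approach supports: every state of the world is listed with its chance, every act has a payoff in each state, and the best act is the one with the highest expected utility. The picnic setting echoes the quotation above, but the states, probabilities, and utility numbers are all invented for illustration and do not come from The Foundations of Statistics or from Radical Uncertainty.

```python
# A toy "small world": every state is enumerated along with its chance.
# All numbers below are hypothetical, chosen only for illustration.
probabilities = {"sun": 0.7, "rain": 0.3}

# Hypothetical utility of each act in each state of the world.
utilities = {
    "picnic": {"sun": 10.0, "rain": -5.0},
    "stay_home": {"sun": 2.0, "rain": 3.0},
}


def expected_utility(act):
    """Probability-weighted average utility of an act over all states."""
    return sum(probabilities[state] * utilities[act][state]
               for state in probabilities)


def best_act():
    """Return the act with the highest expected utility."""
    return max(utilities, key=expected_utility)


if __name__ == "__main__":
    for act in utilities:
        print(act, expected_utility(act))
    print("choose:", best_act())
```

The calculation only works because both dictionaries are complete. In a “large world,” the list of states is open-ended and the probabilities are not known, which is exactly the situation in which this table cannot be written down.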

The book makes extensive use of the “small world” vs. “large world” motif. The authors blame the failures of macroeconomic models on “large world” radical uncertainties such as recessions, wars, technological breakthroughs, and things we have not dreamt of yet. These are the sorts of models that did NOT predict the personal computer revolution, the recession of 2008, Brexit, Trump, etc. I myself would go further and argue that even in a perfectly deterministic world, many of the large models used in macroeconomics would collapse chaotically under their own weight due to their inherent non-linearity.
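The point about non-linearity can be illustrated with a standard textbook example, the logistic map. It is not a macroeconomic model, and the parameter value, starting points, and function names below are assumptions chosen for this sketch. The rule is fully deterministic, with no randomness anywhere, yet two starting values that differ by one part in ten billion soon follow unrelated paths.

```python
def logistic_trajectory(x0, r=4.0, steps=60):
    """Iterate the deterministic rule x -> r * x * (1 - x), starting from x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def max_gap(x0, delta=1e-10, r=4.0, steps=60):
    """Largest distance between two trajectories whose starts differ by delta."""
    a = logistic_trajectory(x0, r, steps)
    b = logistic_trajectory(x0 + delta, r, steps)
    return max(abs(p - q) for p, q in zip(a, b))


if __name__ == "__main__":
    # Hypothetical starting point; values stay between 0 and 1.
    print("largest gap over 60 steps:", max_gap(0.2))
```

With r = 4 the gap between the two paths roughly doubles at each step on average, so a measurement error far too small to notice eventually grows as large as the quantity being forecast.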

I agree that it is naïve to believe you can model “large worlds” in the same way that you can model “small worlds.” But that does not mean that small worlds are irrelevant. As the late energy economist Alan Manne said, “To get a big model to work, you must start with a little model that works, not a big model that doesn’t work.” Thus, to create an airliner, you are better off starting with a paper airplane than an attractive likeness made of plastic blocks.

My role model for bridging the “small world” of theory and the radical uncertainty of the “large world” is William J. Perry, former US Secretary of Defense. Here is a man with a bachelor’s, master’s, and PhD in mathematics, who has nonetheless had a remarkably practical career devoted to preventing nuclear war. I once attended an after-dinner speech of his at which someone asked if he had ever built a mathematical model to solve a thorny problem while at the Pentagon. “No,” he responded, “there was never enough time or data to do that. But because of my training I think about things differently.” Amen. Some may see Radical Uncertainty as a refutation of probabilistic modeling. But I see it as an affirmation of Bill Perry’s approach of understanding probability and knowing when and when not to build a model.

The problem is that a book about unsuccessful mathematical modeling is a little like a book about bicycle crashes. If you don’t know how to ride a bicycle, you certainly won’t want to learn after reading about broken skulls, and you will not have learned about the joy and benefits of bicycles. If, on the other hand, you do ride, then you are already aware of the risks and rewards and are not likely to alter your behavior. In either case I believe the authors could have accomplished their goal in fewer than 500 pages.

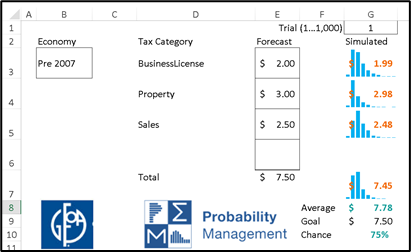

I share many of the authors’ misgivings about large models, and in fact, similar concerns motivated the creation of the discipline of probability management as I will discuss below. But first I want to address the non-modelers, who may wrongly take the book as a call to just talk about problems through “Narratives,” as suggested by the authors, instead of analyzing them.