By Sam L. Savage

Join us for a CEU course on Chance-Informed Thinking

Where?

The 94th MORS Symposium at the U.S. Air Force Academy in Colorado Springs

When?

June 8-9, 2026

Why?

ProbabilityManagement.org has had a long-standing relationship with the Military Operations Research Society, MORS (see our page on Military Readiness). Phil Fahringer, a Lockheed Martin Fellow, has led a MORS Community of Practice in Probability Management, which is sponsoring this accredited course at the upcoming 94th MORS Symposium at the U.S. Air Force Academy in Colorado Springs. Faculty bios for the CEU course and additional presentations are below.

Who?

The course is led by five members of the nonprofit’s board and management, all of whom have contributed to developing or applying the Open SIPmath™ Stochastic Data Standard. They include Dr. Greg Parnell, former MORS President and author of the Handbook of Decision Analysis, which uses SIPmath, and Dr. Sam Savage, Executive Director of the nonprofit and author of The Flaw of Averages and Chancification, which lay out the development and application of SIPmath.

What?

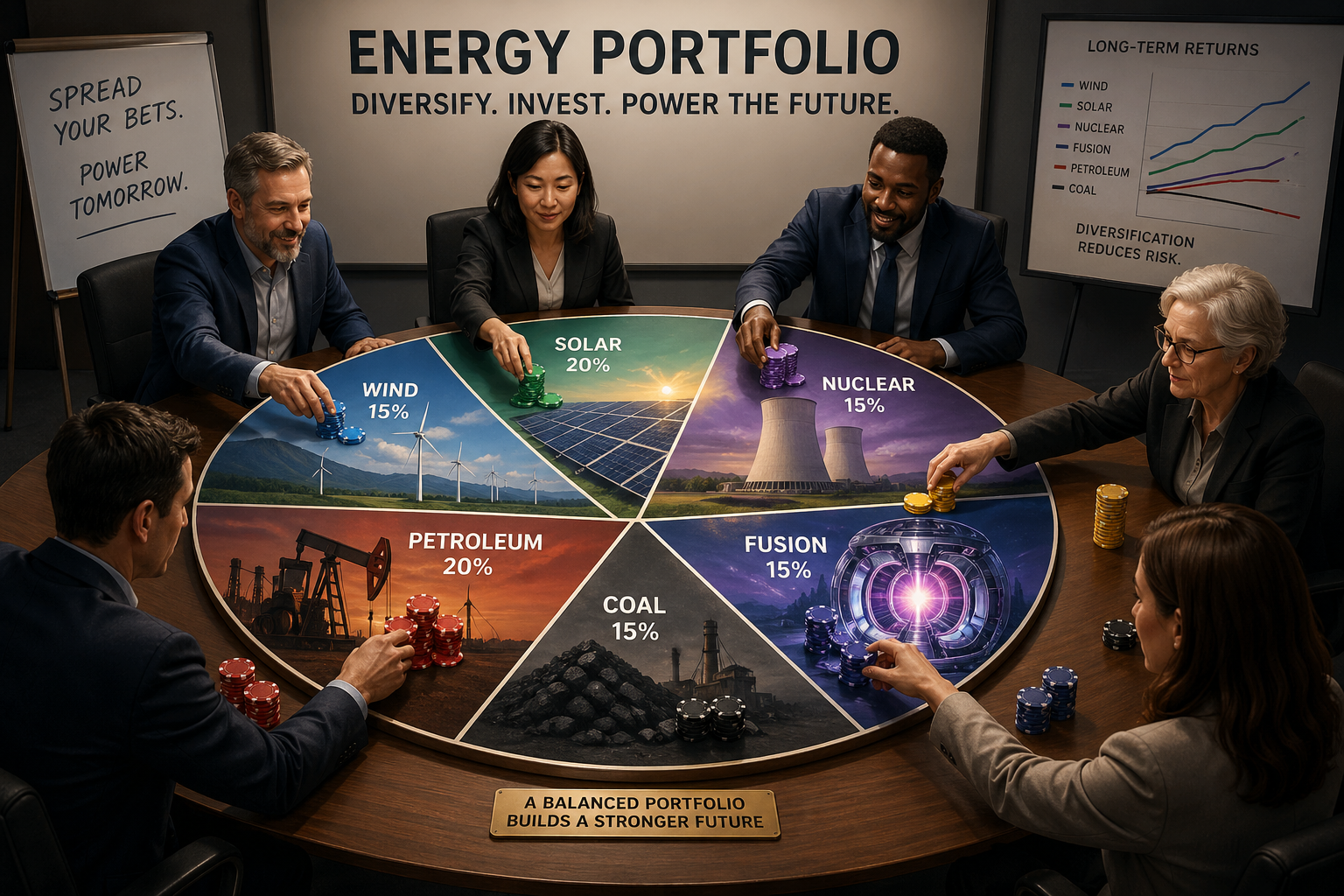

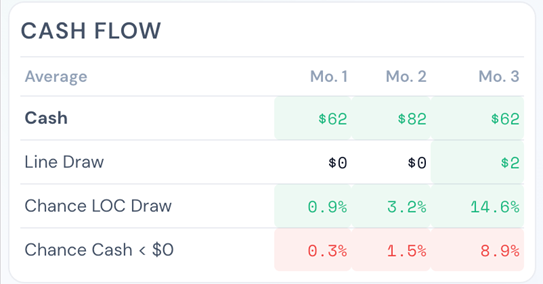

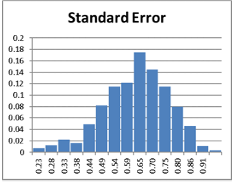

Much analysis of uncertainty in the military is based on expected (average) results. For example, in assigning weapons to targets, the objective is typically to maximize the expected degradation to the adversary. We suggest an alternative objective: maximize the chance of winning the engagement.
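To make the difference concrete, here is a minimal Python sketch with made-up numbers (not taken from our Phalanx article). Each plan is represented by its outcomes over 10 equally likely trials. The engagement is assumed to be won if at least 3 targets are destroyed. The plan names, threshold, and trial values are all hypothetical.

```python
# Hypothetical illustration: each list holds the number of targets destroyed
# in each of 10 equally likely trials (a tiny stand-in for stochastic data).
plans = {
    "concentrate": [8, 0, 8, 0, 0, 8, 0, 0, 8, 0],
    "spread":      [3, 3, 2, 3, 3, 3, 2, 3, 3, 3],
}

WIN_THRESHOLD = 3  # assumed: the engagement is "won" if >= 3 targets are destroyed


def expected(trials):
    """Average outcome across trials (the usual expected-value objective)."""
    return sum(trials) / len(trials)


def chance_of_winning(trials, threshold=WIN_THRESHOLD):
    """Fraction of trials in which the outcome meets the win threshold."""
    return sum(t >= threshold for t in trials) / len(trials)


for name, trials in plans.items():
    print(f"{name:12s} expected={expected(trials):.1f} "
          f"chance_of_win={chance_of_winning(trials):.0%}")

# The two objectives can pick different plans from the same data.
best_by_average = max(plans, key=lambda p: expected(plans[p]))
best_by_chance = max(plans, key=lambda p: chance_of_winning(plans[p]))
print("Best by average:", best_by_average)
print("Best by chance: ", best_by_chance)
```

Here "concentrate" averages 3.2 targets but wins in only 4 of 10 trials, while "spread" averages 2.8 but wins in 8 of 10. An expected-value objective picks the first plan. A chance-of-winning objective picks the second.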

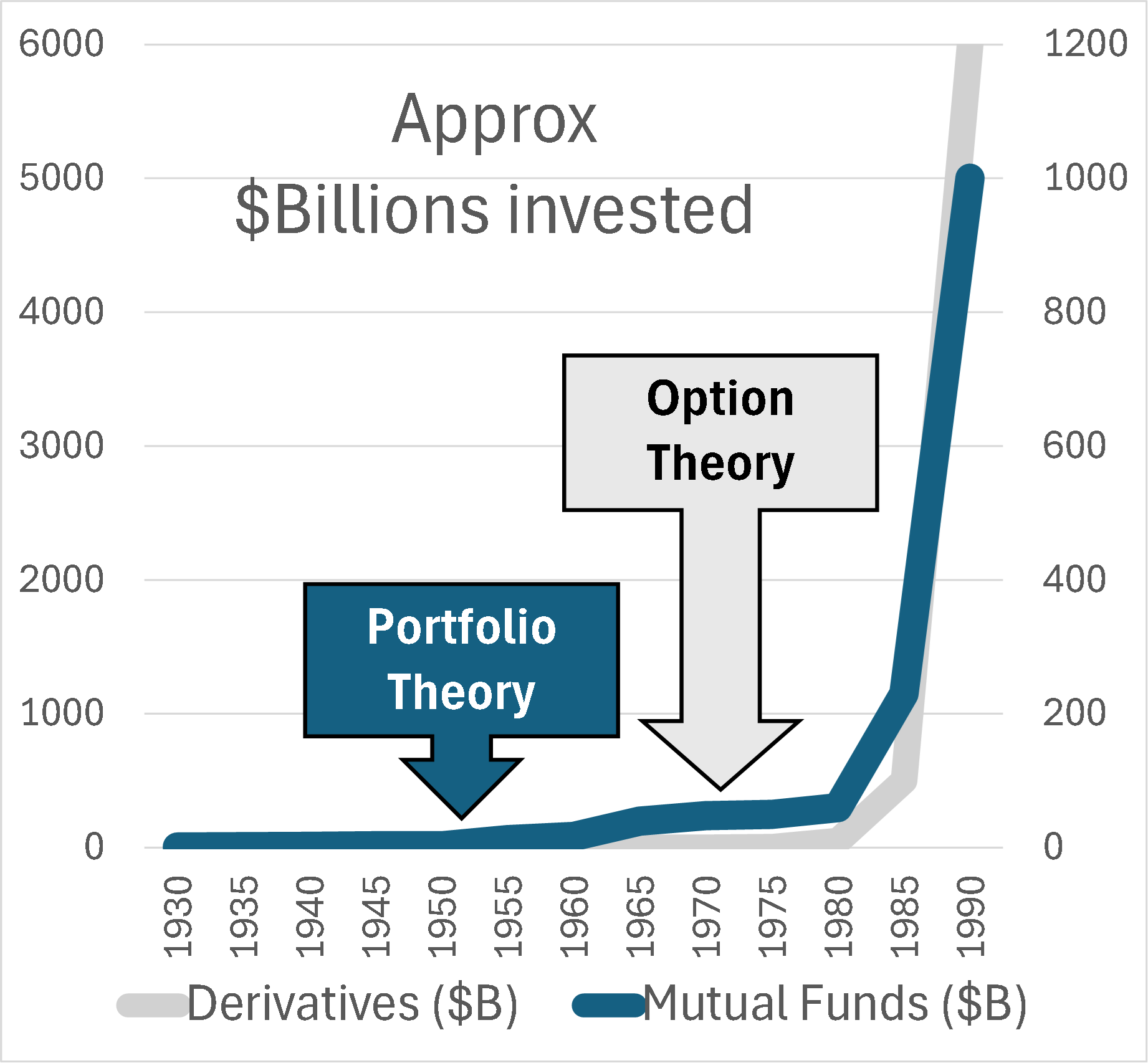

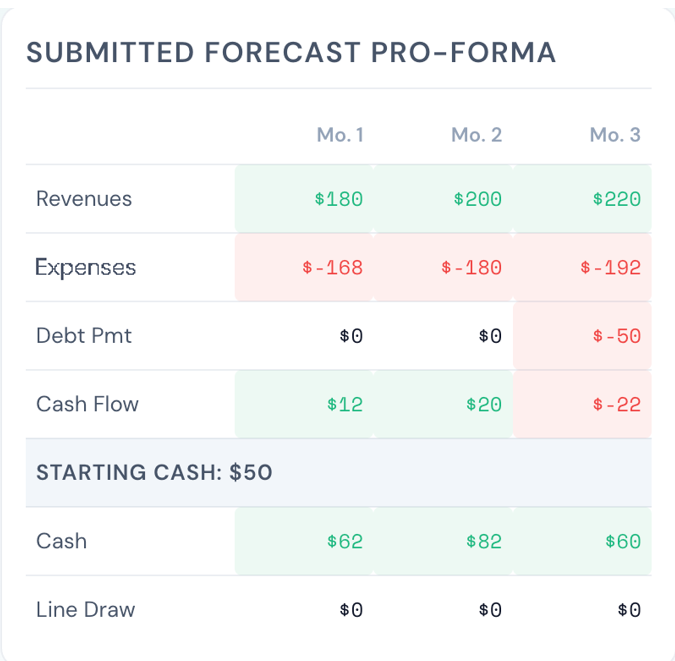

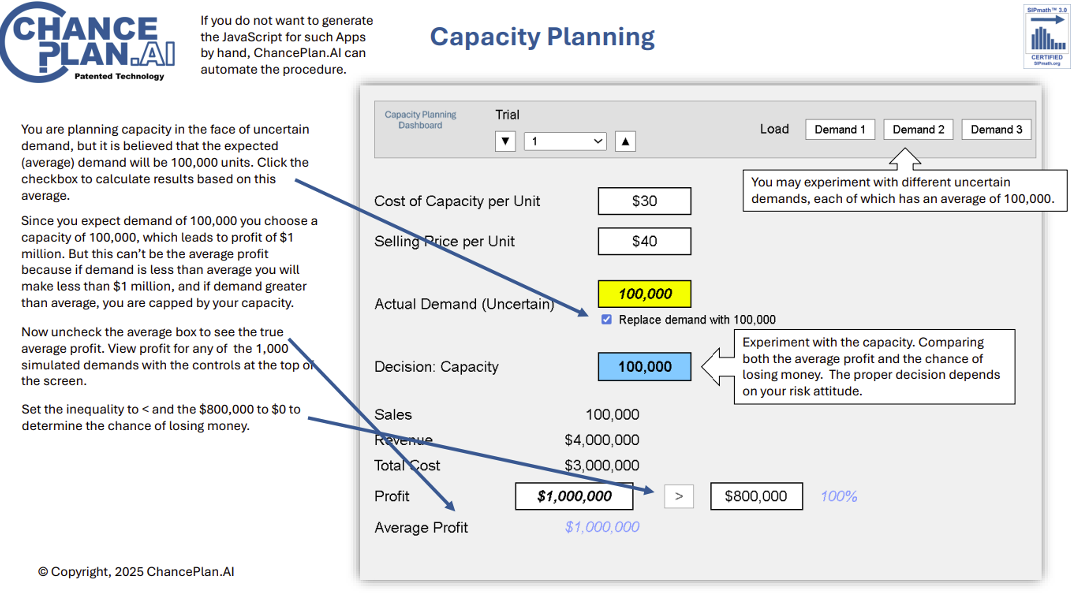

In the past, chance-informed thinking required complex computer simulations. Now the Open SIPmath™ Standard from ProbabilityManagement.org can store “chances” in what is known as Stochastic Data.
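As a rough illustration of the idea, the sketch below treats each uncertain quantity as an array of trial values (a SIP). All the arrays share the same trial index. A hypothetical simulation runs once to build the library. After that, a dashboard can compute chances with simple arithmetic across the stored arrays and no further simulation. The model, variable names, and threshold are invented for illustration. The library is saved as generic JSON, not in the actual Open SIPmath™ file format.

```python
import json
import random

N_TRIALS = 1000  # assumed trial count; every SIP in one library shares it


def build_sip_library(seed=42):
    """Hypothetical simulation, run once. Trial i of every SIP comes from the
    same scenario, so relationships between the variables are preserved."""
    rng = random.Random(seed)
    weather = [rng.random() for _ in range(N_TRIALS)]  # 0 = clear, 1 = worst
    # Invented model: both sorties and hit rate degrade in bad weather.
    sorties = [round(20 - 10 * w + rng.gauss(0, 2)) for w in weather]
    hit_rate = [max(0.0, 0.6 - 0.3 * w + rng.gauss(0, 0.05)) for w in weather]
    return {"sorties": sorties, "hit_rate": hit_rate}


def chance_at_least(values, threshold):
    """Chance of meeting a threshold = fraction of trials that meet it."""
    return sum(v >= threshold for v in values) / len(values)


# Store the SIPs once as plain data (generic JSON, NOT the Open SIPmath format).
stored = json.dumps(build_sip_library())

# Dashboard side: no simulation, just trial-by-trial arithmetic on stored SIPs.
library = json.loads(stored)
hits = [s * h for s, h in zip(library["sorties"], library["hit_rate"])]
print(f"Chance of at least 8 hits: {chance_at_least(hits, 8):.1%}")
```

The arrays share a trial index, so each trial's product combines sorties and hit rate from the same weather scenario. Multiplying the two averages instead would discard that relationship.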

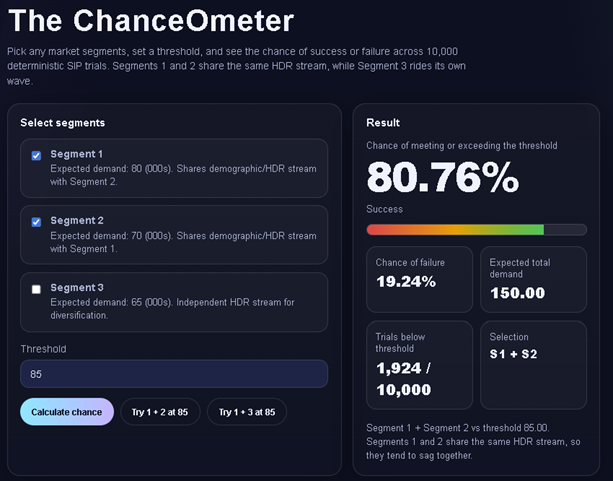

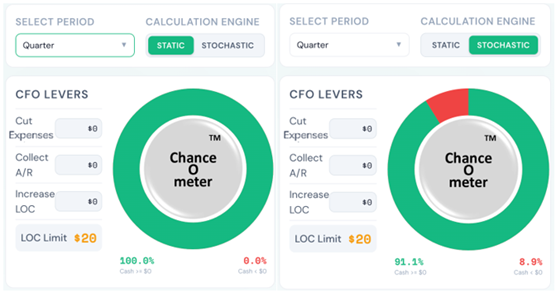

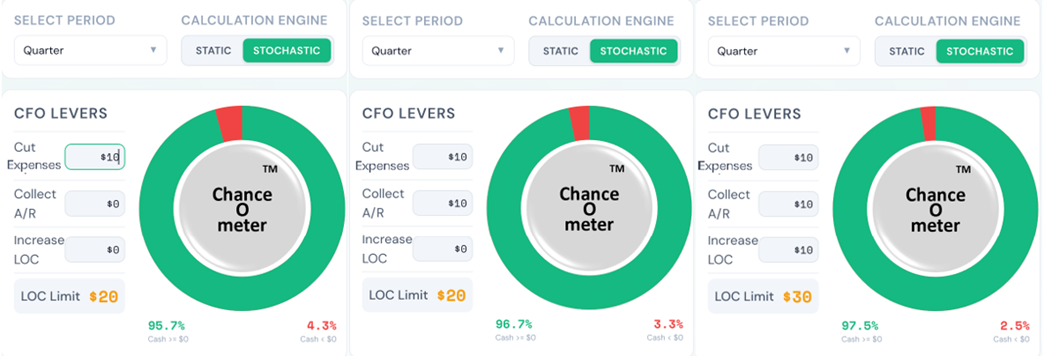

This course will show you how to use SIPmath data to create interactive chance-informed dashboards in anything from Excel to phone apps.

Connor McLemore, Chair of the nonprofit’s National Security Applications, and I have just had an article accepted by Phalanx Magazine. It shows how to use SIPmath data in the weapon-targeting problem above and get results on your smartphone. Don’t believe us? Scan the QR code or follow the link below, and hit the Help button when you get there for more instructions.

If you want to play with more ChanceOmeters on your phone, visit my recent blog.

If you want to learn how to build ChanceOmeters for yourself, come to the course!

https://chanceage.com/Targeting/

How?

Click here to go to the CEU Course page, where you can register and download the syllabus.

CEU Course Faculty Bios

Phil Fahringer

Strategic Modeling Engineer Fellow with Lockheed Martin. Over 37 years of combined experience in the military and industry. Master’s degrees in operations research and strategic studies from the U.S. Naval Postgraduate School and the U.S. Army War College, respectively, and an undergraduate degree in Business Logistics from Pennsylvania State University. Leads the Lockheed Martin Corporation Strategic Modeling and Decision Support Community of Practice and co-chairs the Military Operations Research Society Probability Management Community of Practice. Active with ProbabilityManagement.org since 2013.

Dr. Karen Guttieri

Associate Director of ProbabilityManagement.org, specializing in national security. She brings experience across defense education and research institutions including the Army Cyber Institute at the U.S. Military Academy at West Point, Air University, and the Naval Postgraduate School. Her roles have included research leadership, curriculum development, faculty leadership, and cross-sector collaboration at the intersection of technology, security, and policy. She holds a Ph.D. in political science from the University of British Columbia and is affiliated with Janos LLC and Stanford University’s Center for International Security and Cooperation.

Connor McLemore

Principal Operations Research Analyst for CANA Advisors and Chair of National Security Applications at ProbabilityManagement.org since 2014. Former Naval Postgraduate School Military Assistant Professor and Operations Research Program Officer. Graduate of the United States Navy Fighter Weapons School (Topgun) with numerous operational deployments during 20 years of naval service. Has taught SIPmath at the Naval Postgraduate School and co-authored several articles and models displayed on the nonprofit’s Military Readiness page.

Dr. Greg Parnell

Professor of Practice in the Department of Industrial Engineering at the University of Arkansas. Research interests include decision and risk analysis and systems engineering. Co-editor of Decision Making for Systems Engineering and Management (3rd ed., 2022), lead author of the Handbook of Decision Analysis (2013), and editor of Trade-off Analytics: Creating and Exploring the System Tradespace (2017). He has a Ph.D. from Stanford University. Retired Air Force Colonel.

Dr. Sam Savage

Dr. Sam L. Savage is the Executive Director of ProbabilityManagement.org, author of The Flaw of Averages: Why We Underestimate Risk in the Face of Uncertainty (John Wiley & Sons, 2009, 2012) and Chancification: Fixing the Flaw of Averages (2022). He is the inventor of the Stochastic Information Packet (SIP), an auditable data array for conveying uncertainty, and an Adjunct in Civil and Environmental Engineering at Stanford University. He has a Ph.D. from Yale University.

Additional Presentations at MORS

Don't miss these presentations featuring members of ProbabilityManagement.org's board and management team. Visit the MORS 94th Symposium site for details (all times below are Mountain Time).

Readiness is relative and qualitative, but it shouldn’t be, Phil Fahringer, Tuesday June 9, 2026 1:30 PM - 2:00 PM

Developing a Value Model using Microsoft CoPilot – Potential and Pitfalls, Dr. Gregory S. Parnell, Tuesday June 9, 2026 2:30 PM - 3:00 PM

Modeling Military Cargo Unmanned Systems for Contested, Distributed Logistics, Connor McLemore, Tuesday June 9, 2026 4:30 PM - 5:00 PM and Wednesday June 10, 2026 11:00 AM - 11:30 AM

Stochastic Data for Pre-Tactical Airspace Decision-Making, Dr. Sam L. Savage, Dr. Gregory S. Parnell, Colonel Brendan Cullinan, Dr. Richard Ham, Wednesday June 10, 2026 9:00 AM - 9:30 AM and Wednesday June 10, 2026 11:00 AM - 11:30 AM

Cyber Threshold Effects Require Stochastic Modeling, Dr. Karen Guttieri, Dr. Sam L. Savage, Thursday June 11, 2026 10:30 AM - 11:00 AM and Thursday June 11, 2026 2:30 PM - 3:00 PM

Copyright © 2026 Sam L. Savage